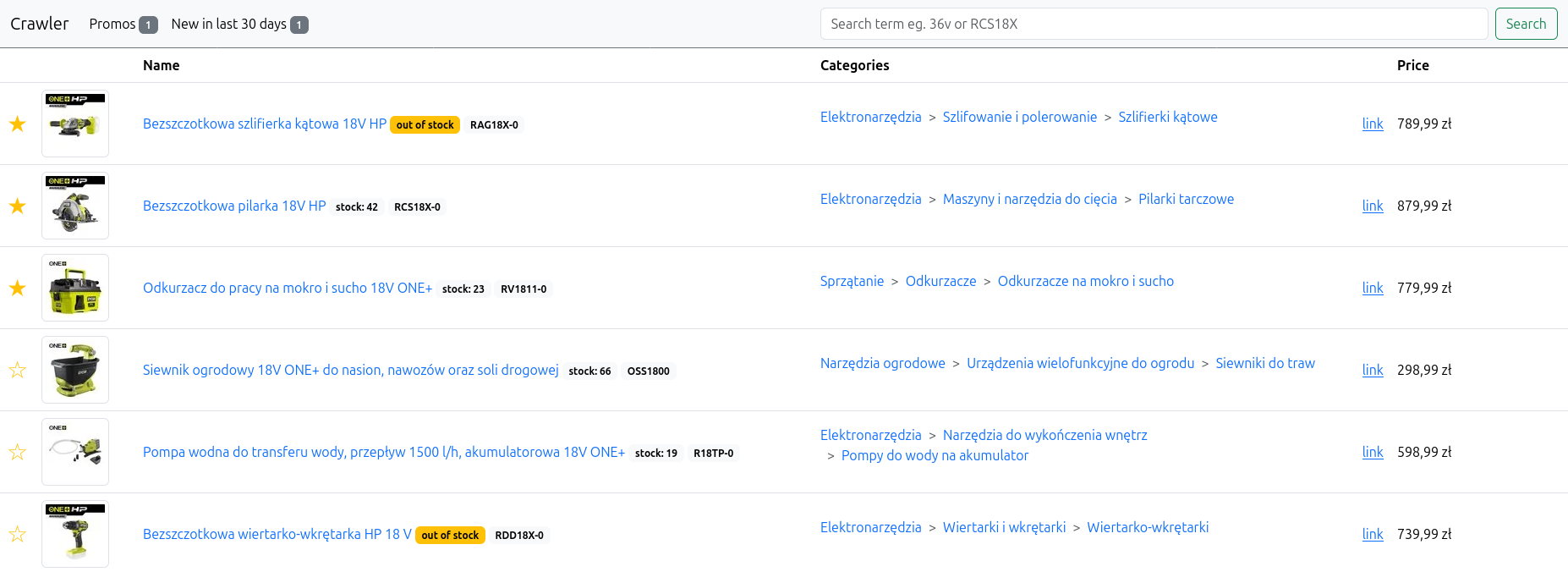

ryobi-crawler

Project start

- Clone the repository:

git clone https://git.techtube.pl/krzysiej/ryobi-crawler.git

- Change into the project directory:

cd ryobi-crawler

- Build and start the Docker container:

docker compose up -d

- Create the database.sqlite file and its tables:

docker compose exec php-app php console.php app:migrate

- Scrape all products from the Ryobi website:

docker compose exec php-app php console.php app:scrape

- Open the web interface at localhost:9001 in a web browser.
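
The first-run steps above can be chained in a small shell function. This is only a sketch: the function names `run` and `setup_project` and the `DRY_RUN` variable are hypothetical helpers introduced here, not part of the project. With `DRY_RUN=1` the commands are printed instead of executed, which makes it easy to review what would happen.

```shell
#!/bin/sh
# Sketch of the first-run steps. REPO_URL comes from the instructions above;
# run, setup_project and DRY_RUN are hypothetical names used for illustration.

REPO_URL="https://git.techtube.pl/krzysiej/ryobi-crawler.git"

# Execute a command, or only print it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# The body runs in a subshell, so the caller's working directory is untouched
# and "exit" only leaves the function.
setup_project() (
    run git clone "$REPO_URL" || exit 1
    run cd ryobi-crawler || exit 1
    run docker compose up -d || exit 1
    # Create database.sqlite and its tables, then fill them with products.
    run docker compose exec php-app php console.php app:migrate || exit 1
    run docker compose exec php-app php console.php app:scrape
)
```

Calling `DRY_RUN=1; setup_project` prints the five commands in order; calling `setup_project` with `DRY_RUN` unset runs them and stops at the first failure.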

Update project

- Change into the project directory.

- Pull the latest changes:

git pull

- Refresh the cache on production by removing the cache directory:

rm -rf var/cache

- Rebuild the image and restart the container in one step:

docker compose up -d --build --force-recreate
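
The update steps above could be wrapped in one function so they always run in the same order. This is a minimal sketch, not a script shipped with the project: `run`, `update_project` and `DRY_RUN` are hypothetical names, and the project directory is passed as an argument.

```shell
#!/bin/sh
# Sketch of the update procedure. run, update_project and DRY_RUN are
# hypothetical names used for illustration.

# Execute a command, or only print it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Usage: update_project /path/to/ryobi-crawler
# The body runs in a subshell, so the caller's working directory is untouched.
update_project() (
    cd "${1:-.}" || exit 1
    run git pull || exit 1
    # Remove the cache directory so it is rebuilt after the update.
    run rm -rf var/cache || exit 1
    run docker compose up -d --build --force-recreate
)
```

Stopping at the first failed step means the containers are not rebuilt if `git pull` fails.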

Bonus

Install composer package

- Run

bin/composer require vendor/package-name

Running Cron

For now, the only way to run the app:scrape command on a schedule is to use the host crontab.

- Edit the host crontab:

crontab -e

- Add a new line, for example:

0 1 * * * cd /var/project/directory/ && docker compose exec php-app php console.php app:scrape

- Save and exit the editor. Cron will then run app:scrape once per day, at 01:00.
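
If a scrape ever takes longer than the interval between runs, a small wrapper can keep two runs from overlapping. The sketch below is an assumption-laden example rather than part of the project: `scrape_once`, `PROJECT_DIR`, `LOCK_DIR` and `DRY_RUN` are hypothetical names. It also passes `-T` to `docker compose exec` to disable pseudo-TTY allocation, which may be needed because cron jobs run without a terminal.

```shell
#!/bin/sh
# Hypothetical cron wrapper. PROJECT_DIR, LOCK_DIR, DRY_RUN and scrape_once
# are assumptions made for this sketch.

PROJECT_DIR="${PROJECT_DIR:-/var/project/directory}"
LOCK_DIR="${LOCK_DIR:-/tmp/ryobi-scrape.lock}"

scrape_once() {
    # mkdir is atomic, so it doubles as a simple lock: if the directory
    # already exists, an earlier run is still in progress and this one skips.
    if ! mkdir "$LOCK_DIR" 2>/dev/null; then
        echo "scrape already running, skipping" >&2
        return 1
    fi

    if [ "${DRY_RUN:-0}" = "1" ]; then
        # Print the command instead of running it.
        echo "+ docker compose exec -T php-app php console.php app:scrape"
        status=0
    else
        (cd "$PROJECT_DIR" && docker compose exec -T php-app php console.php app:scrape)
        status=$?
    fi

    # Release the lock and report the scrape's exit status.
    rmdir "$LOCK_DIR"
    return "$status"
}
```

To use it from cron, the script would end with a call to `scrape_once`, and the crontab line would invoke the script instead of calling `docker compose` directly. The lock is not released if the script is killed mid-run, so a stale lock directory has to be removed by hand.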